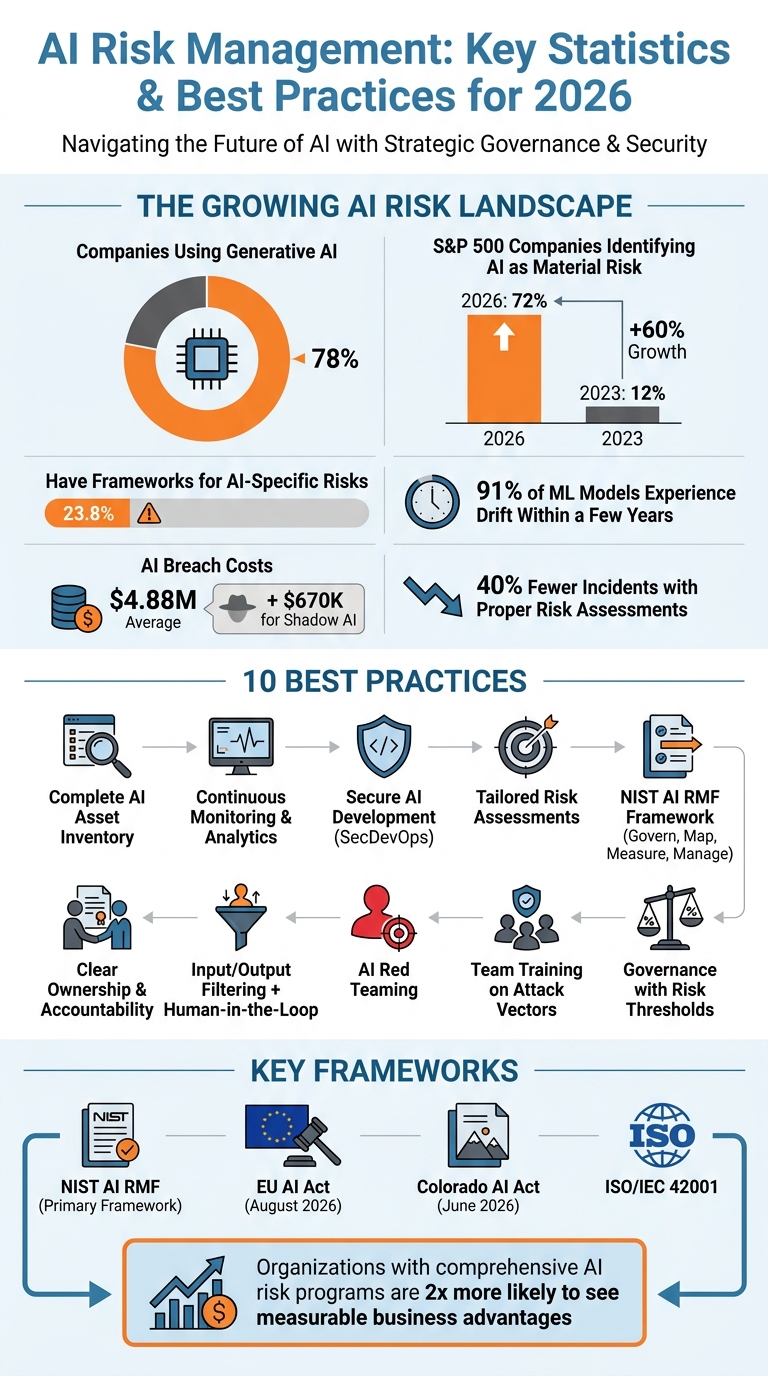

AI is now a cornerstone of business, with 78% of companies using generative AI. But with this growth comes risk: 72% of S&P 500 companies identify AI as a material risk, up from 12% in 2023. Poor governance is a major issue, as only 23.8% of organizations have frameworks to address AI-specific risks.

Key takeaways for reducing AI risks:

- Inventory and Tagging: Start by identifying all AI tools in use, including "Shadow AI" (unapproved tools). This reduces breach costs and builds a foundation for better governance.

- Continuous Monitoring: AI evolves, so track performance, detect model drift, and flag anomalies in real time.

- AI-Specific Risk Assessments: Tailor evaluations to each AI system, addressing risks like bias, data poisoning, or drift.

- Secure Development (SecDevOps): Embed security checks in development pipelines to automate bias testing and ensure compliance.

- Ownership and Accountability: Assign clear roles for AI oversight, from risk owners to governance committees.

- Input/Output Filtering: Use safeguards like human-in-the-loop reviews and filtering to prevent harmful outputs.

- AI Red Teaming: Test AI systems for vulnerabilities, especially autonomous agents, to identify weaknesses before attackers do.

- Team Training: Educate teams on AI-specific attack vectors, like prompt injection or model extraction.

- Governance Frameworks: Implement clear escalation paths and thresholds for risk management, aligned with the NIST AI RMF.

- Regulatory Readiness: Prepare for upcoming regulations like the EU AI Act and Colorado AI Act by conducting annual impact assessments and aligning with compliance frameworks.

Effective AI risk management isn't optional anymore. It reduces incidents by 40%, strengthens compliance, and protects against costly breaches. With regulations tightening and AI risks growing, businesses must act now to secure their AI ecosystems.

AI Risk Management Statistics and Best Practices 2026

Responsible AI Risk Management | NIST AI Risk Framework Explained

sbb-itb-97f6a47

1. Conduct Complete AI Asset Inventory and Tagging

Managing AI effectively starts with knowing exactly what you're working with. Today, the average enterprise uses over 65 unapproved AI tools, and discovery efforts often reveal three to five times more AI systems than initially documented. This issue, often referred to as "Shadow AI", arises when employees adopt tools like generative AI writing assistants, meeting transcription software, or browser extensions without formal approval. The consequences? Organizations with high levels of shadow AI experienced an additional $670,000 in average breach costs in 2026 compared to those with proper oversight. By addressing this gap, businesses can lay the groundwork for better implementation, risk assessment, and proactive management.

Practical Steps for Business Leaders

Kick off a two-week discovery sprint to evaluate AI use across all departments. This process should include reviewing procurement logs, SaaS subscriptions, and API call records. Be thorough - cover everything from in-house models and purchased AI tools to features embedded in existing software like CRM or HR platforms. To catch overlooked tools, use methods like network traffic analysis and browser monitoring. These techniques can identify browser extensions and external API calls that employees might have set up independently.

Tying Inventory to the NIST AI RMF Framework

The "Map" function of the NIST AI RMF highlights the importance of starting with a comprehensive AI system inventory. Once the inventory is complete, categorize each asset by assigning a risk tier (Critical, High, Medium, Low) based on its impact on individuals and the organization. For ongoing monitoring, review critical systems quarterly, high-risk systems semi-annually, and low-risk systems every two years.

Tackling AI-Specific Risks Through Tagging

Tagging assets with detailed data lineage is essential for creating an AI Bill of Materials (AI-BOM). This document tracks data flows, model dependencies, and accountability, helping to identify vulnerabilities like training data poisoning or malicious library injections before they lead to issues. Considering that 91% of machine learning models experience drift within a few years of deployment, setting performance baselines is crucial. Establish drift detection thresholds - for instance, flagging a performance drop of over 5% - to ensure timely intervention.

Assign a dedicated owner to each system to oversee its performance, risk, compliance, and ethical considerations. Maintain a live, regularly updated registry instead of a static spreadsheet, revisiting it every 90 days to account for model updates or retirements. This approach ensures accurate tracking and supports ethical risk assessments, which are vital for subsequent steps like secure development and human oversight.

2. Implement Continuous Monitoring and Behavioral Analytics

After cataloging your AI systems, the next step is keeping a close eye on how they perform in real-world scenarios. Unlike traditional software, AI models don’t remain static - they evolve as they encounter new data. A model that works well at launch might degrade over time, develop biases, or even become vulnerable to attacks, often without obvious warning signs. This is where continuous monitoring steps in, ensuring your systems stay effective and secure.

Alignment with the NIST AI RMF Framework

Once your AI inventory is complete, regular monitoring becomes essential to track system behavior. This process connects the Measure and Manage functions of the NIST AI Risk Management Framework. Specifically, it addresses MEASURE 3 - tracking risks over time - and MANAGE 2, which focuses on managing risks that emerge after deployment. Unlike a one-time evaluation, monitoring is an ongoing effort. With the EU AI Act's high-risk system requirements set to take effect in August 2026, practices like real-time logging and post-market monitoring are no longer optional - they’re mandatory.

Tackling AI-Specific Risks

Behavioral analytics is critical for addressing risks like model drift and adversarial attacks. To detect model drift, monitoring tools should flag statistical changes between live data and training baselines before accuracy starts to drop. For example, you could set triggers for performance declines exceeding 5% or feature distribution shifts beyond 1 standard deviation. On the security side, tracking inputs, outputs, tool calls, and data access in real time can help identify issues like prompt injections or data leaks. Regular audits and compliance checks also make a difference - companies that implement these measures are over three times more likely to see strong returns from generative AI compared to those that don’t.

Scaling for Enterprise AI Systems

For large-scale AI systems, monitoring needs to match the level of risk. Lower-risk applications might only need periodic reviews, such as quarterly check-ins. However, high-risk systems - those affecting hiring decisions, credit approvals, or healthcare outcomes - require real-time dashboards and automated safeguards. Using MLOps observability platforms can streamline this process, automating drift detection and anomaly alerts to reduce manual effort. It’s also important to go beyond aggregate accuracy metrics; monitoring by demographic subgroups can reveal hidden issues that broader metrics might overlook. Palavalli, a data security expert at Forcepoint, emphasizes this point:

"We really need a system that continuously analyzes behavior, automatically applies adaptive risk scoring and enforcement and protects data throughout its entire life cycle."

Practical Steps for Business Leaders

Scalability measures translate directly into actionable oversight strategies. Start by setting clear escalation thresholds. For instance, a detected prompt injection should trigger an immediate incident response, while drift beyond 1 standard deviation might require a detailed review. High-risk models should have automated dashboards, while moderate-risk systems might benefit from weekly reviews. For critical decisions, include human-in-the-loop processes to manually verify low-confidence predictions. The results of monitoring should feed into retraining pipelines and incident response plans, creating a feedback loop to keep systems within acceptable risk levels.

With corporate data inputs into AI tools increasing by 485% between 2023 and 2024, and only 24% of generative AI projects currently incorporating robust security measures, continuous monitoring isn’t just about compliance - it’s a way to gain a competitive edge.

3. Embed Secure AI Development Lifecycle with SecDevOps

Building security into the foundation of AI development is critical, especially as AI risks evolve faster than traditional IT security can keep up. Conventional Model Risk Management (MRM) workflows, which often take 6–12 weeks for review, can't match the speed of modern AI development cycles. This is where SecDevOps steps in. By automating security checks directly within the development pipeline, it allows teams to innovate quickly without sacrificing security standards. This approach not only ensures secure builds and deployments but also complements continuous monitoring for a more risk-aware development process.

Alignment with the NIST AI RMF Framework

SecDevOps integrates seamlessly with the NIST AI RMF by embedding its Govern and Manage functions into your technical workflows. It enables continuous checks for bias, robustness, and accuracy throughout the development process. This aligns with Phase 4 of the NIST framework, which emphasizes incorporating AI risk management into enterprise risk management (ERM) and software development lifecycle (SDLC) practices. For example, the U.S. Treasury's Financial Services AI Risk Management Framework, released in February 2026, translates the NIST guidelines into 230 actionable control objectives.

Effectiveness in Mitigating AI-Specific Risks

SecDevOps addresses AI-specific vulnerabilities by implementing layered defenses at every stage - data operations, model operations, deployment, and platform management. For instance:

- Data Poisoning: Mitigate risks with strict access controls, data quality checks, and minimizing unnecessary data exposure.

- Model Drift: Use continuous monitoring systems that detect statistical shifts between live inputs and training data to prevent performance degradation. Research shows 91% of machine learning models experience drift within a few years of deployment.

- Adversarial Manipulation: Introduce "circuit breaker" thresholds that automatically pull models from production when significant drift or manipulation is detected.

These measures create a proactive defense mechanism, ensuring AI systems remain reliable and secure over time.

Scalability for Enterprise-Level AI Systems

Scaling AI security becomes increasingly challenging as usage grows. For example, while 77% of employees use generative AI at work, only 28% of businesses have clear policies governing its use. This "Shadow AI" phenomenon introduces serious security gaps. SecDevOps addresses these challenges by extending oversight to all AI assets, including those operating outside formal policies.

Instead of relying on large periodic updates, implement incremental retraining pipelines with automated gates for bias and safety testing. Automating tools like Model Cards and AI Bills of Materials (AI-BOMs) within CI/CD pipelines ensures ongoing transparency. These measures are essential, especially considering that AI-related data breaches cost an average of $4.88 million, with Shadow AI-related breaches adding an extra $670,000 to the bill.

Practicality in Implementation for Business Leaders

To make these security measures actionable, organizations should clearly define roles and responsibilities. A RACI matrix can help assign duties to model owners, validators, and risk reviewers, meeting the NIST framework's Govern 2.1 requirement. Additionally, human-in-the-loop (HITL) workflows for model promotion ensure that automated retraining does not introduce unintended risks like bias or compromised models.

A practical implementation timeline could look like this:

- 1–6 weeks: Assign an AI risk owner (e.g., CAIO or CRO) and establish escalation paths.

- 3–6 months: Automate bias and robustness checks.

- 5–9 months: Deploy MLOps systems for drift detection and incident response.

Organizations that conduct thorough AI risk assessments report 40% fewer AI-related incidents, demonstrating the tangible benefits of a well-structured SecDevOps approach.

4. Perform Tailored AI-Specific Risk Assessments

Standard risk assessments don't cut it when it comes to AI systems. AI models behave unpredictably, so assessments need to be customized. These tailored evaluations consider the specific context of each AI system - its purpose, the data it relies on, the people it impacts, and the potential risks involved.

Alignment with NIST AI RMF Framework

Custom assessments align with the NIST AI RMF framework by addressing its Govern, Map, Measure, and Manage functions. Here’s how it works:

- The Map function requires documenting the AI system’s intended purpose, deployment environment, and its impact on stakeholders. This step ensures you don’t dive into technical metrics without first understanding the broader context.

- The Measure function translates risks into 13 evaluation dimensions, such as fairness, accuracy, safety, and explainability.

- The Manage function determines how to handle identified risks - whether by mitigating, transferring, avoiding, or accepting them.

For organizations using Large Language Models (LLMs), the NIST AI 600-1 (Generative AI Profile) offers more than 200 actions to manage risks like hallucinations and prompt injection. An example of this in action is the U.S. Treasury’s Financial Services AI Risk Management Framework, which adapts NIST guidelines into 230 actionable control objectives. Since AI behavior can shift due to changes in data or environment, these assessments should be iterative.

Effectiveness in Mitigating AI-Specific Risks

Tailored assessments directly tackle common AI vulnerabilities. For instance:

- Model drift impacts 91% of machine learning models within a few years of deployment. Effective assessments use statistical divergence measures to detect changes in live input data compared to training baselines. Automated thresholds - like flagging a review if performance drops by over 5% - can catch issues early.

- Data poisoning risks are mitigated through strong data governance, including consistent telemetry auditing and strict access controls.

The gap between AI adoption and governance is glaring: 78% of companies use generative AI, but only 23% have adequate security policies in place. As AI Safety Researcher Eliezer Yudkowsky cautions:

"By far, the greatest danger of Artificial Intelligence is that people conclude too early that they understand it".

Scalability for Enterprise-Level AI Systems

Scaling assessments requires a tiered, risk-based approach. High-risk systems - like those used for hiring, credit decisions, or healthcare - demand thorough assessments, including impact statements and human oversight. Low-risk systems, such as spam filters, can rely on lighter, automated checks.

Organizations can use NIST AI RMF Profiles to adapt the framework to their specific needs. By integrating AI risk assessments into existing Enterprise Risk Management (ERM) and Governance, Risk, and Compliance (GRC) programs, businesses avoid creating isolated processes. Automated tools can streamline risk identification, control scoring, and real-time monitoring, making continuous evaluation possible instead of relying on one-time assessments.

Practicality in Implementation for Business Leaders

The unpredictable nature of AI demands clear, actionable steps for implementing tailored risk assessments. A 90-day roadmap can help:

- First 30 days: Assign an AI risk owner and establish governance responsibilities.

- Next 30 days: Document the system’s purpose, stakeholders, and potential failure modes.

- Final 30 days: Implement monitoring systems, incident response plans, and automated drift detection.

Maintain an AI Risk Profile as a dynamic, audit-ready document. Independent assessors should handle the Measure function to avoid bias, especially if they weren’t involved in building the model. Define triggers for reassessment, such as retraining the model, expanding to new customer segments, or adjusting decision thresholds.

As Dogan Akbulut emphasizes:

"Accepting a residual risk without documented organizational authorization is not risk management. It is risk neglect".

Currently, only 18% of organizations have an enterprise-wide council to oversee responsible AI governance. This is no longer optional - 72% of S&P 500 companies now highlight AI as a material risk in their public disclosures, a sharp rise from just 12% in 2023.

5. Map Risks Using NIST AI RMF Govern, Map, Measure, Manage

The NIST AI RMF framework revolves around four key functions - Govern, Map, Measure, and Manage - to provide continuous oversight throughout the AI lifecycle. This approach acknowledges that AI systems are not static and require ongoing attention rather than one-off evaluations.

Alignment with the NIST AI RMF Framework

Each function serves a specific purpose in managing risks effectively:

- Govern: Lays the groundwork by establishing accountability and fostering a culture that prioritizes risk awareness. This might involve appointing a Chief AI Officer or creating an AI Governance Committee with real authority to make decisions.

- Map: Focuses on understanding the context of AI systems by documenting their purpose, deployment environment, and potential impacts on stakeholders. This step helps uncover vulnerabilities like third-party data poisoning.

- Measure: Relies on quantitative tools such as Test, Evaluation, Verification, and Validation (TEVV) to evaluate risks, including model drift and performance issues.

- Manage: Prioritizes risks and allocates resources to address incidents and recover effectively.

This framework takes a socio-technical view, addressing risks that stem from technical, social, legal, and ethical factors. The increasing recognition of AI as a risk is evident - 56% of Fortune 500 companies flagged AI in their 2025/2026 filings, up from just 9% the year before. With these functions clearly defined, their role in mitigating AI risks becomes apparent.

Effectiveness in Mitigating AI-Specific Risks

The framework addresses vulnerabilities unique to AI systems. For instance:

- Model drift: Detected through statistical tools that monitor changes between live input data and the original training data.

- Data poisoning: Tackled by the Map function, which emphasizes documenting data dependencies and third-party components. The Govern function complements this by enforcing transparency through vendor contracts.

The urgency of these measures is underscored by the rapid rise in corporate AI data usage, which grew by 485% between 2023 and 2024. Shadow AI breaches, in particular, come with steep costs.

As Zscaler aptly puts it:

"Governance without enforcement is just documentation, and documentation does not stop attacks".

By connecting high-level policies with actionable controls, the framework ensures operational enforcement, closing the gap between intent and practice.

Scalability for Enterprise-Level AI Systems

This framework is designed to scale across various organizations and risk levels. High-risk systems, such as those used in credit scoring or healthcare, require comprehensive application of all four functions. Meanwhile, low-risk tools can operate with more streamlined governance. This flexibility makes it suitable for both startups and global enterprises.

Additionally, the framework fosters collaboration by providing a shared language that aligns teams across security, legal, IT, and application development, ensuring consistent risk assessment.

| Function | Purpose | Key Artifacts | Typical Owner |

|---|---|---|---|

| Govern | Set accountability, roles, and policies | AI Inventory, Acceptable Use Policy, RACI Matrix | Chief Risk Officer / AI Committee |

| Map | Identify system context and failure modes | Impact Assessment, Stakeholder Map, Risk Tiering | Product Manager / System Owner |

| Measure | Assess and track risk against metrics | Bias Test Results, Drift Dashboards, Model Cards | Data Science / ML Ops |

| Manage | Execute responses and monitor risk | Incident Response Playbook, Risk Treatment Plan | Security / Legal |

Organizations are moving beyond "documentation theater" to implement enforceable, real-time controls. The framework supports this shift by linking policies with operational tools, ensuring a practical approach to managing risks across diverse AI systems.

Practicality in Implementation for Business Leaders

To implement the framework effectively, a phased 90-day plan is recommended:

- First 30 days: Focus on foundational tasks like creating an AI asset inventory and forming a governance committee.

- Next 30 days: Assess the top five highest-risk systems.

- Final 30 days: Deploy live monitoring tools and incident response playbooks.

A complete AI asset inventory should include applications, browser extensions, API integrations, and embedded SaaS copilots. This step is critical for addressing shadow AI, especially since 68% of organizations have reported data leaks linked to AI tools.

Independent assessments are another essential component. By involving teams not directly responsible for building AI models, organizations can reduce self-certification bias and provide regulators with credible insights. Ensuring technical controls align with organizational policies bridges the gap between theoretical frameworks and practical risk management.

Rebecca Leung, Founder of RiskTemplates, highlights the importance of acting now:

"The gap between 'we have an AI policy' and 'we can survive an examination' is where most organizations are stuck right now. Close it before the examiner asks".

Lastly, updating vendor contracts to require disclosures about AI usage and bias testing methods ensures transparency and accountability from third-party providers.

6. Establish Clear Ownership and Accountability Structures

Without clear ownership, AI governance risks becoming just another policy exercise with no real impact. The NIST AI RMF views accountability as the backbone of its framework. If ownership structures are weak, the entire system - Map, Measure, and Manage - falls apart.

Alignment with the NIST AI RMF Framework

The GOVERN function places the responsibility for AI risk decisions squarely on executive leadership, particularly through GOVERN 2.3. This isn't just a procedural checkbox - it’s a strategic imperative. To ensure clarity, organizations should use a RACI matrix to assign roles across the AI lifecycle, from data ingestion to model retirement.

One key principle? Avoid self-approval. Risk assessments should always be validated by an independent party.

Effectiveness in Mitigating AI-Specific Risks

AI systems come with unique risks, and clear ownership is critical to addressing them. For instance, appointing a Model Risk Owner ensures performance degradation is monitored, with formal reviews triggered when thresholds are breached. Similarly, a Chief Data Officer should oversee data quality, lineage, and integrity for both training and inference datasets, protecting against issues like data poisoning.

The consequences of unclear ownership can be severe. Consider these examples:

- In October 2024, the Consumer Financial Protection Bureau fined Apple Inc. and Goldman Sachs Group, Inc. over $89 million due to governance failures in Apple Card's AI-driven billing and dispute systems.

- In November 2021, Zillow shut down its "iBuying" division after its AI pricing algorithm failed to catch valuation errors, leading to a $569 million write-down and a 25% workforce reduction.

These cases underscore how clear ownership not only mitigates risks but also supports scalable governance for enterprise AI systems.

Scalability for Enterprise-Level AI Systems

For large organizations, the lack of a dedicated AI risk owner often leads to chaos. AI deployment metrics are nine times less likely to have a named owner compared to traditional KPIs. Shockingly, only 16.9% of strategic measures across organizations have an explicit owner, despite 80% of Fortune 500 companies using AI. Even worse, only 25% of these companies have governance frameworks that can keep up with their AI adoption.

To scale governance effectively, every AI system should have a named human owner (and a backup). Relying on vague teams to take responsibility simply doesn’t work. Establishing an AI Governance Committee with decision-making authority is another critical step. Yet, only 18% of organizations currently have such a council.

| Governance Role | Primary Responsibility | NIST Mapping |

|---|---|---|

| AI Governance Committee | Decision authority, policy setting, and escalation oversight | GOVERN 2.1 |

| Chief Risk Officer (CRO) | AI-specific acceptable use policies and risk classification | GOVERN 1.2 |

| AI Project Risk Lead | Maintaining project-level risk registers and documentation | GOVERN 2.3 |

| Independent Validator | Conducting an independent challenge of model performance and bias | MEASURE 1.3 |

| MLOps Team | Continuous monitoring for drift and performance degradation | MANAGE 4.1 |

Practicality in Implementation for Business Leaders

Once ownership and accountability structures are defined, the challenge is implementing them effectively. Start with a 90-day plan:

- Designate AI risk owners, establish a governance charter, and inventory existing AI systems.

- Define escalation thresholds for board-level reporting, such as a 5% drop in performance or evidence of bias.

Vendor contracts should also be reviewed and updated to mandate transparency around AI use and model testing. Instead of creating isolated silos, integrate AI governance with existing enterprise risk management (ERM) and governance, risk, and compliance (GRC) frameworks. This ensures consistency and avoids redundancy.

"Someone has to own this. Not 'everyone is responsible' - that's code for 'no one is responsible.'"

– Rebecca Leung, RiskTemplates

Accountability must extend to the AI supply chain as well. For example, if you’re using APIs from providers like OpenAI or Anthropic, assign specific owners to monitor third-party failures and develop contingency plans. Procedures for retiring AI systems under GOVERN 1.7 are just as important. Decommissioned models shouldn’t continue calling inactive endpoints or contribute to lingering risks.

7. Deploy Input/Output Filtering and Human-in-the-Loop Reviews

Once accountability is in place, the next focus should be on building operational safeguards. Automated AI systems need strict controls, and this is where input/output filtering and human-in-the-loop (HITL) reviews come into play. From 2023 to 2024, corporate data inputs into AI tools skyrocketed by 485%, with sensitive data in those inputs nearly tripling to 27.4%.

Alignment with NIST AI RMF Framework

These safeguards align closely with the MANAGE function of the NIST AI Risk Management Framework (RMF). For example, MANAGE 4.1 emphasizes the need for post-deployment monitoring, including appeal and override mechanisms. The MAP function requires documenting how human users will interpret outputs (MAP 2.2), while MEASURE 2.9 underscores the importance of making AI outputs understandable for decision-makers.

| NIST Function | Control Mechanism | Operational Purpose |

|---|---|---|

| Map | Context Documentation | Defines how humans will interpret and use AI outputs (MAP 2.2) |

| Measure | Explainability Testing | Ensures model logic is clear enough for human oversight (MEASURE 2.9) |

| Measure | Feedback Loops | Tracks performance drift through user and expert reviews (MEASURE 4.3) |

| Manage | Output Filtering | Reduces risks by screening for harmful or sensitive content |

| Manage | HITL Overrides | Provides a manual "kill switch" or appeal path for unsafe outputs (MANAGE 4.1) |

Effectiveness in Mitigating AI-Specific Risks

Input filtering acts as a protective barrier, blocking threats like prompt injection attacks, jailbreaking attempts, and data poisoning. A multi-layered validation approach - combining allow-lists for expected patterns with machine learning classifiers - can effectively detect such attacks. On the other hand, output filtering scans and removes sensitive information, prevents harmful content, and addresses hallucinations before outputs reach users.

The stakes are immense. AI-related data breaches cost businesses an average of $670,000 more than typical breaches, yet only 24% of generative AI projects currently implement adequate security measures. HITL reviews act as a safety net for critical decisions in sectors like credit, insurance, and employment, where errors can lead to serious financial or emotional harm. Human reviewers should periodically audit AI-driven decisions, document any discrepancies, and use this feedback to improve the model.

Scalability for Enterprise-Level AI Systems

Scaling these safeguards doesn’t mean reviewing every single decision manually - that would be impractical. Instead, businesses can adopt a triage system, where low-risk AI use cases (about 75% of requests) are approved automatically, leaving detailed reviews for high-risk cases. Tools like Guardrails AI or NeMo Guardrails can help automate filtering at scale.

For monitoring model drift, automated thresholds are key. A >5% decline in accuracy should immediately trigger a review. It’s worth noting that 91% of machine learning models experience drift within a few years of deployment. Statistical divergence measures can identify shifts in input data distributions compared to training data before accuracy drops become apparent.

Practicality in Implementation for Business Leaders

Start by setting clear thresholds for when human intervention is needed and defining rollback triggers. Technical safeguards like rate limiting, authentication, and output filtering should be implemented at all endpoints to prevent hallucinations and data leaks. For high-risk scenarios, enforce HITL approval workflows before promoting models to production. This ensures accountability and reduces the risk of severe consequences.

"We really need a system that continuously analyzes behavior, automatically applies adaptive risk scoring and enforcement and protects data throughout its entire life cycle – from discovery and classification to lineage and governance, all the way through to detection and remediation." – Palavalli, Data Security Expert, Forcepoint

Rather than building separate frameworks, integrate these AI-specific controls into existing governance, risk, and compliance (GRC) programs. Establish feedback loops where user complaints and human overrides are logged and used to retrain models, helping to maintain stability and performance over time. These measures not only strengthen AI oversight but also complement broader risk management efforts.

8. Apply AI Red Teaming for Agentic AI Jailbreak Resilience

Once input/output controls and human oversight are in place, the next step is proactive testing. This is where AI red teaming comes in. It's no longer just about testing chatbot responses - it now digs into what autonomous agents are capable of doing. For example, in 2026, researchers tested 55 customer service agents and managed to manipulate them into granting unauthorized refunds and policy exceptions, adding up to a fabricated value of over $10 million. This shift from focusing on individual models to assessing system-level vulnerabilities requires a fresh testing approach. These efforts align closely with the NIST AI RMF measurement and mapping functions.

Alignment with NIST AI RMF Framework

Red teaming plays a key role in the MEASURE function of the NIST AI RMF, particularly subcategories MEASURE 2.5 (Security Testing) and MEASURE 2.6 (Robustness Testing). It also supports the MAP function by identifying known limitations (MAP 1.5) and determining potential system impacts (MAP 1.6). Research highlights the urgency of this work: tailored attacks achieved an 81% success rate, compared to just 11% for baseline defenses. The unpredictable, non-deterministic nature of LLM agents exacerbates these risks. For instance, increasing the number of repeated attempts per task from 1 to 25 raised the average attack success rate from 57% to 80%.

Effectiveness in Mitigating AI-Specific Risks

The process begins with thorough reconnaissance to identify vulnerabilities, including those tied to private data exposure, untrusted content, and external communication - dubbed the "lethal trifecta". In early 2026, researchers exploited a Supabase MCP connection, using indirect prompt injections in customer support messages to trick an LLM assistant into leaking entire database tables. Another example involved Claude's iMessage integration, which attackers manipulated to forge conversation histories and create unlimited Stripe coupons without user awareness.

"AI red teaming is adversarial search, not evaluation. You are not scoring the model - you are trying to break the system." – General Analysis

Scalability for Enterprise-Level AI Systems

To scale red teaming for enterprise systems, a hybrid approach works best. Automated tools like PyRIT or Garak can cover a wide range of scenarios, while human experts bring creativity and depth to edge cases. Organizations should embed red teaming into their CI/CD and MLOps pipelines to ensure continuous testing with every model update or new tool integration. The financial benefits are clear: companies without AI security automation face an average breach cost of $5.52 million, compared to $3.62 million for those that have it - a difference of $1.9 million. Shadow AI tools also increase breach costs by $670,000 on average and add 10 extra days to detection time.

Practicality in Implementation for Business Leaders

Red teaming complements earlier governance and monitoring practices by strengthening systems against advanced threats. Start by creating an AI Bill of Materials (AI-BOM) - a detailed inventory of every model, agent, tool integration, and data dependency. You can't test what you haven't documented. Define threat models tailored to specific attacker profiles, such as external users or compromised data sources, and focus efforts on systems with the highest business impact. Assemble interdisciplinary teams that include ML engineers, security specialists, and domain experts to uncover risks that technical teams might miss. Finally, prioritize findings based on their potential business impact - like unauthorized transactions - rather than the novelty of the exploit.

9. Invest in Team Training on AI Attack Vectors

Red teaming helps identify vulnerabilities, but it's your team that ultimately defends against emerging threats. Unfortunately, there's a major gap here - 60% of organizations report training shortfalls as the biggest obstacle to responsible AI by 2026. Meanwhile, Stanford data shows AI-related security incidents have jumped by 56.4%. Without proper training, teams may miss critical threats. Building on insights from red teaming, focused and thorough training is essential to bridge this gap and enhance AI security.

Alignment with the NIST AI RMF Framework

Clear ownership in risk management is essential, but training is what solidifies these efforts. Comprehensive training supports the GOVERN function of the NIST AI RMF by improving workforce diversity, boosting AI literacy, and fostering a culture that prioritizes risk awareness. Under the EU AI Act, AI literacy is now a mandatory requirement for organizations deploying high-risk systems. Beyond meeting these regulations, shared knowledge strengthens internal controls and audits. Training also enhances the MANAGE function by equipping teams to allocate resources effectively and respond to threats - like model poisoning or adversarial attacks - with agility.

Effectiveness in Mitigating AI-Specific Risks

AI threats are evolving fast. For instance, prompt injection attacks succeed between 50% and 90% when defenses are weak. Even small-scale data poisoning, affecting just 0.001% of a dataset, can compromise entire models. Other risks, such as voice cloning and deepfakes, are being used for executive impersonation, while 68% of organizations have experienced data leaks tied to AI tool usage. Effective training should address a wide range of risks, including prompt injection (direct and indirect), data poisoning, model extraction, adversarial inputs, supply chain attacks, agent exploitation, and synthetic media fraud.

Scalability for Enterprise-Level AI Systems

Training shouldn't stop at technical teams - it needs to include all stakeholders. Legal, compliance, product management, and finance teams must also understand the AI risks relevant to their roles. Shadow AI, or tools used without IT approval, is a major blind spot. Currently, 77% of employees use generative AI at work, yet only 28% of organizations have clear policies in place. Breaches involving Shadow AI cost an average of $670,000 more than standard incidents. A 90-day plan can help scale training effectively: spend the first 30 days on AI inventory and baseline establishment, the next 30 days defining metrics and aligning controls, and the last 30 days deploying monitoring and tabletop exercises.

Practical Implementation for Business Leaders

Business leaders should start by forming an AI Governance Committee with decision-making authority, often led by the CRO, CISO, or Head of Compliance. Develop an AI Incident Response Playbook to prepare for model failures, bias issues, and adversarial attacks. Use the NIST AI RMF Playbook to create role-specific training programs for developers, operators, and senior leadership. To counter risks like voice cloning, implement multi-channel verification for high-risk financial transactions. Additionally, maintain an AI Bill of Materials to track supply chain components, enabling rapid vulnerability management. With eCrime breakout times averaging just 29 minutes - and in some cases as fast as 27 seconds - your team's training can make all the difference in responding quickly and effectively. This well-rounded approach to training not only reduces AI risks but also ensures ethical oversight throughout the AI lifecycle.

10. Codify Governance with Risk Thresholds and Escalation Paths

After establishing accountability structures, the next step is to formalize governance by setting clear risk thresholds and escalation paths. Even the most prepared teams need well-defined guidelines to manage AI risks effectively. Interestingly, 72% of S&P 500 companies now identify AI as a material risk in their public disclosures, a dramatic increase from just 12% in 2023. This highlights a growing concern: many organizations struggle to move from simply having an AI policy to being prepared for regulatory scrutiny. Rebecca Leung, Founder of RiskTemplates, sums it up perfectly:

"The gap between 'we have an AI policy' and 'we can survive an examination' is where most organizations are stuck right now".

By formalizing governance, organizations can bridge this gap, creating a framework that ensures policies translate into actionable practices and effective escalation processes.

Alignment with the NIST AI RMF Framework

To strengthen governance, align with the NIST AI RMF's GOVERN function. This involves several key steps:

- Documenting legal requirements (Govern 1.1)

- Incorporating trustworthy characteristics into policies (Govern 1.2)

- Establishing decommissioning procedures for outdated systems (Govern 1.7)

A crucial element in this framework is distinguishing between risk appetite (the strategic willingness to take risks for potential gains) and risk tolerance (the operational boundaries that cannot be crossed). In February 2026, the U.S. Treasury introduced the Financial Services AI Risk Management Framework (FS AI RMF), which builds on NIST's structure by outlining 230 specific control objectives. This provides financial institutions with a detailed roadmap for implementation.

Effectiveness in Mitigating AI-Specific Risks

Defining clear thresholds can stop small issues from escalating into significant problems. For instance, 91% of machine learning models experience drift within a few years of deployment. To address this, set automated alerts for key thresholds, such as:

- Performance drops exceeding 5%

- Adverse impact ratios falling below 0.8

- Missing data rates above 2%

These alerts can trigger formal reviews, ensuring timely intervention. The NIST AI RMF subcategory MANAGE 2.4 specifically calls for mechanisms to deactivate or adjust AI systems when their performance deviates from intended use. As data security expert Palavalli explains:

"We really need a system that continuously analyzes behavior, automatically applies adaptive risk scoring and enforcement and protects data throughout its entire life cycle".

Scalability for Enterprise-Level AI Systems

Scaling governance for large organizations requires a tiered review structure that balances speed with safety. A four-tier system can help streamline decision-making:

- Self-Service: Handles low-risk, routine requests (about 75% of cases) in just a few hours.

- Trust but Verify: Addresses moderately risky but familiar scenarios (20% of requests) within days.

- Strategic Review: Focuses on high-risk, novel cases (5-15% of requests), which may take weeks or months.

- Prohibited: Instantly rejects applications deemed unacceptable.

Standardized intake forms can automatically route requests to the appropriate tier based on factors like risk level, novelty, and prior experience. This structured approach ensures that governance remains agile while maintaining thorough oversight, complementing continuous monitoring and earlier risk assessment efforts.

Practicality in Implementation for Business Leaders

For effective implementation, business leaders should focus on actionable tools and processes:

- Develop a RACI matrix to clarify roles and responsibilities.

- Create an AI Incident Response Playbook to address high-risk events like model failures, bias findings, or adversarial attacks.

- Set automated alerts for performance drift, as manual quarterly reviews are inadequate for fast-evolving AI risks.

- Require executive approval for any residual risks before deploying high-impact systems.

A 90-day roadmap can help streamline this process:

- First 30 days: Establish foundational elements like charters and policies.

- Next 30 days: Conduct assessments, including inventory and risk tiering.

- Final 30 days: Operationalize governance through monitoring systems and incident response playbooks.

This phased approach ensures that governance is not only comprehensive but also practical and ready for real-world application.

Comparison Table

The table below highlights the key differences between traditional IT risk assessments and those designed specifically for AI systems. These distinctions reveal how the unique nature of AI requires a fundamentally different approach to risk evaluation and management.

| Feature | Standard IT Risk Assessment | AI-Specific Risk Assessment |

|---|---|---|

| System Logic | Fixed output behavior | Probabilistic – outputs follow statistical trends |

| Primary Risks | Data breaches, system downtime, unauthorized access | Model drift, prompt injection, algorithmic bias, hallucinations |

| Risk Nature | Static/Point-in-time evaluation | Dynamic/Evolving (Performance degrades over time) |

| Assessment Frequency | Periodic audits or milestone-based reviews | Continuous lifecycle monitoring |

| Methodology | Vulnerability scanning, penetration testing | Red teaming, fairness testing, explainability evaluation |

| Data Focus | Data at rest and in transit (PII protection) | Training data integrity, poisoning attacks, membership inference |

| Mitigation Focus | Access controls, patching, encryption | Input/output filtering, human-in-the-loop oversight, automated guardrails |

| Framework Alignment | ISO 31000, NIST CSF, GDPR (DPIA) | NIST AI RMF, ISO/IEC 23894, EU AI Act, ISO/IEC 42001 |

| Human Role | System administrator/User | Human-in-the-loop with override authority |

These differences highlight why AI risk assessments require a tailored approach to ensure ethical and effective governance.

One of the most striking contrasts is the shift from fixed to probabilistic systems. Traditional IT systems operate with predictable outputs, but AI systems are inherently dynamic, requiring constant monitoring to track changes in behavior. As Palavalli from Forcepoint explains:

"We really need a system that continuously analyzes behavior, automatically applies adaptive risk scoring and enforcement and protects data throughout its entire life cycle".

The expanded attack surface of AI systems also introduces risks that traditional controls can't address. For instance, AI-specific threats like prompt injection attacks have an alarmingly high success rate of 50-90% on unprotected large language models. Even more concerning, 48% of AI models leak training data through their outputs, exposing vulnerabilities that conventional data protection methods are ill-equipped to handle.

Another critical departure lies in the socio-technical perspective. Traditional assessments often focus on technical vulnerabilities, such as misconfigured firewalls. In contrast, AI-specific frameworks like the NIST AI RMF examine systems through a broader lens, evaluating trustworthiness across seven dimensions: validity/reliability, safety, security/resilience, accountability/transparency, explainability/interpretability, privacy-enhancement, and fairness. This holistic approach is increasingly vital, as 72% of S&P 500 companies now identify AI as a material risk in their public disclosures, a sharp rise from just 12% in 2023.

These insights emphasize the importance of adopting continuous and adaptive risk management strategies to navigate the complexities of AI systems effectively.

Integrating Consulting Expertise via Top Consulting Firms Directory

Creating a solid AI risk management program often demands expertise beyond what internal teams can provide. For instance, while 78% of organizations use generative AI, only 23% have established adequate security policies for it. This disparity highlights the pressing need for external guidance, especially with regulatory deadlines approaching - like the EU AI Act, which takes full effect on August 2, 2026, and Colorado's AI regulations, effective June 30, 2026. Partnering with external experts helps organizations not only meet these regulations but also strengthen their resilience against operational risks.

The Top Consulting Firms Directory (https://allconsultingfirms.com) is a valuable resource for addressing these challenges. Their services include ISO/IEC 42001 implementation, LLM penetration testing for prompt injection vulnerabilities, and Evidence Pack Sprints designed to create audit-ready documentation aligned with frameworks like the NIST AI RMF and EU AI Act. Organizations can also search for consultants based on certifications and expertise in areas such as Agentic AI Security and adversarial machine learning - both critical for addressing AI vulnerabilities.

External consultants bridge the gap between technical teams and executive leadership, ensuring that AI risk governance is both structured and actionable. While only 15% of corporate boards currently receive AI-related metrics, a significant 72% of S&P 500 companies now recognize AI as a material risk in their public disclosures. These specialists help translate complex technical risks into clear, board-level insights and may even provide fractional CISO or CIO services to guide organizations through regulatory inquiries or leadership transitions.

AI-related data breaches are costly, averaging $4.88 million per incident, with Shadow AI adding an extra $670,000 to breach costs. Non-compliance with the EU AI Act can result in fines as high as 7% of global revenue. However, organizations that conduct thorough AI risk assessments report 40% fewer AI-related incidents and achieve faster compliance with regulations. When choosing consulting partners, it’s crucial to select firms that integrate AI risk into existing Enterprise Risk Management (ERM) systems, ensuring alignment with frameworks like the NIST AI RMF or ISO/IEC 42001. By leveraging these consulting services, businesses can effectively combine external expertise with their internal AI risk management strategies.

Conclusion

AI risk management has evolved from a technical concern to a pressing business priority. Today, 72% of S&P 500 companies identify AI as a material risk in their public disclosures, a dramatic increase from just 12% in 2023. Clearly, treating AI governance as an afterthought is no longer an option.

Organizations that adopt the practices discussed earlier can better protect their AI systems and operations. Taking a proactive approach to risk management has been shown to reduce incidents by 40%, while companies with comprehensive Responsible AI programs are twice as likely to see measurable business advantages compared to those with superficial efforts. Strong risk management also helps maintain consumer trust - no small feat when 62% of customers report losing confidence in brands after viral AI missteps.

"By far, the greatest danger of Artificial Intelligence is that people conclude too early that they understand it." - Eliezer Yudkowsky, AI Safety Researcher

One-time audits won't cut it when 91% of machine learning models experience drift within a few years of deployment. Businesses need to move beyond passive documentation and adopt active, ongoing governance. A risk-differentiated triage system can help prioritize resources, addressing low-risk applications efficiently while dedicating expertise to higher-stakes AI systems, such as agentic AI.

With regulatory deadlines looming - like the EU AI Act and Colorado's AI regulations set to take full effect in 2026 - the clock is ticking. Now is the time to establish centralized AI registries, incorporate human-in-the-loop reviews for critical decisions, and build cross-functional accountability frameworks. Whether you rely on in-house teams or external consultants, embedding these practices ensures compliance and positions your organization to thrive in an increasingly AI-driven world.

FAQs

What’s the fastest way to find and control Shadow AI?

The quickest way to tackle Shadow AI is through a well-organized AI risk assessment. This process includes pinpointing risks unique to AI, keeping a close watch on AI systems, and putting governance controls in place. Adhering to proven frameworks and industry best practices helps ensure a comprehensive strategy to address the challenges posed by Shadow AI.

How do we set drift and bias thresholds that trigger action?

To establish drift and bias thresholds, start by pinpointing key risk indicators, such as shifts in model accuracy or changes in data distribution. Next, set measurable thresholds that align with your performance objectives or regulatory requirements. Implement a system for continuous monitoring to quickly identify when these thresholds are exceeded. This approach allows for prompt actions, like conducting model reviews or retraining, to resolve issues before they affect decision-making or compliance.

What should we do first to prepare for the EU AI Act in 2026?

To get started, take stock of all your AI systems and classify them. This process is crucial for determining which systems are subject to the EU AI Act and evaluating their associated risk levels. By doing so, you can clearly define the scope of each system and pinpoint the compliance requirements they need to meet. This foundational step ensures you're prepared for the next stages.